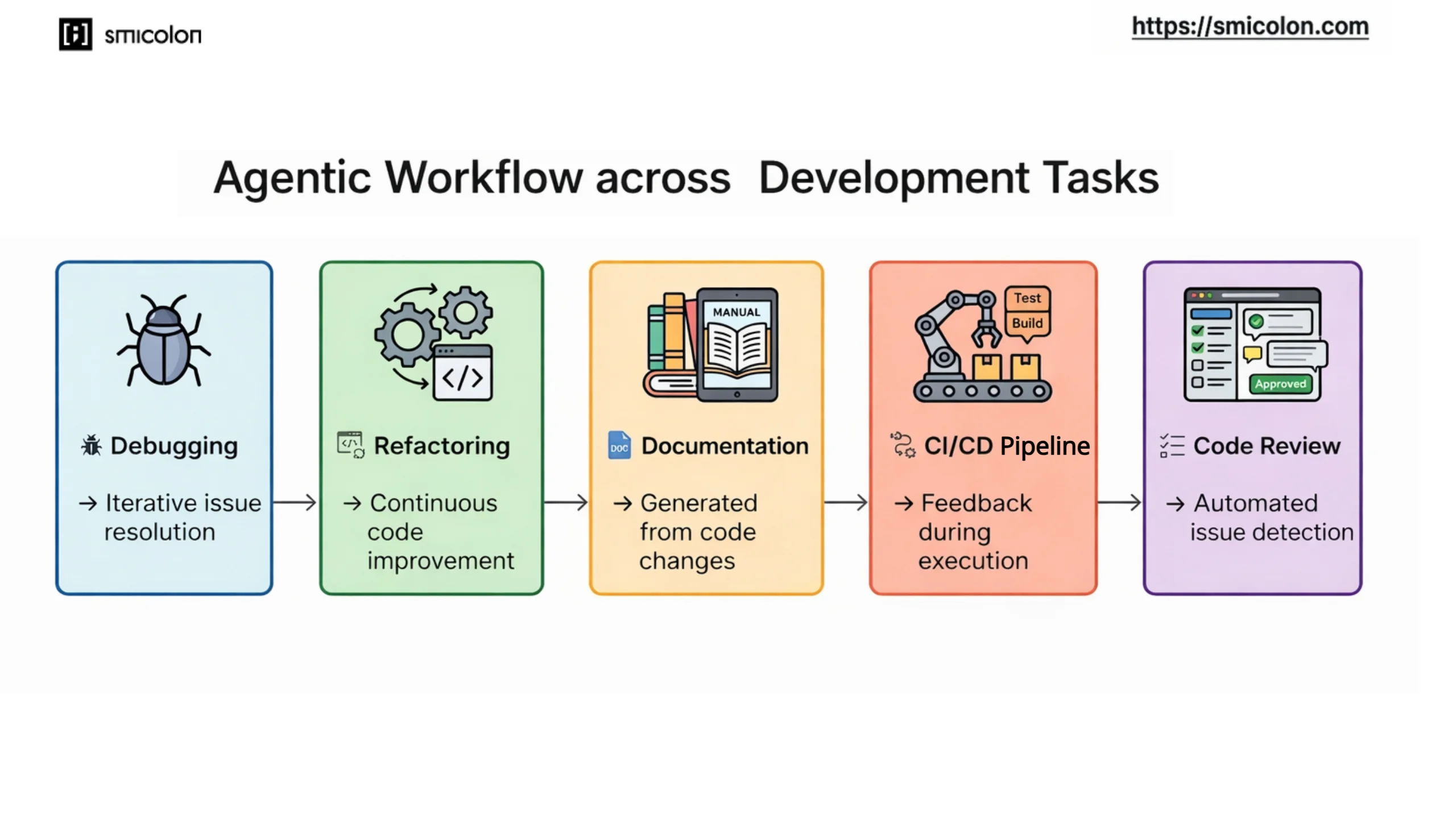

Automated Debugging Loops

One of the most immediate examples appears in debugging workflows. In this setup, a failing test can start an iterative loop in which AI agents propose a fix, validate it against the tests, and refine it step by step. This lets certain debugging tasks move faster than traditional manual investigation.
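The loop described here can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: `debug_loop`, `toy_run_tests`, and `toy_propose_fix` are hypothetical names, and the toy functions stand in for a real test runner and a real model call.

```python
from typing import Callable, Optional


def debug_loop(
    run_tests: Callable[[str], list[str]],
    propose_fix: Callable[[str, list[str]], str],
    source: str,
    max_attempts: int = 3,
) -> Optional[str]:
    """Return a version of `source` that passes the tests, or None."""
    candidate = source
    for attempt in range(max_attempts + 1):
        failures = run_tests(candidate)  # validate the current candidate
        if not failures:
            return candidate  # tests pass: stop iterating
        if attempt == max_attempts:
            break  # no fix attempts left
        candidate = propose_fix(candidate, failures)  # refine using feedback
    return None


# Toy stand-ins so the sketch runs end to end (both are hypothetical).
def toy_run_tests(source: str) -> list[str]:
    namespace: dict = {}
    exec(source, namespace)  # load the candidate code
    result = namespace["add"](2, 3)
    return [] if result == 5 else [f"add(2, 3) returned {result}, expected 5"]


def toy_propose_fix(source: str, failures: list[str]) -> str:
    # A real agent would call a model here; this toy swaps one operator.
    return source.replace("a - b", "a + b")


BUGGY = "def add(a, b):\n    return a - b\n"
fixed = debug_loop(toy_run_tests, toy_propose_fix, BUGGY)
```

The attempt limit is a design choice in this sketch: it keeps the loop from running indefinitely when no proposed fix makes the tests pass.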

AI-Assisted Refactoring and Code Maintenance

Refactoring and long-term code maintenance are also becoming part of agentic AI workflows. Instead of relying on engineers to scan repositories manually, AI agents can review the codebase continuously and apply improvements over time. This helps teams keep large systems maintainable as projects grow.

Automated Documentation

Documentation is another area where AI agent workflows reduce manual effort. Instead of maintaining documentation separately, AI agents can generate documentation directly from code changes as part of the workflow. This allows documentation to evolve alongside the codebase.

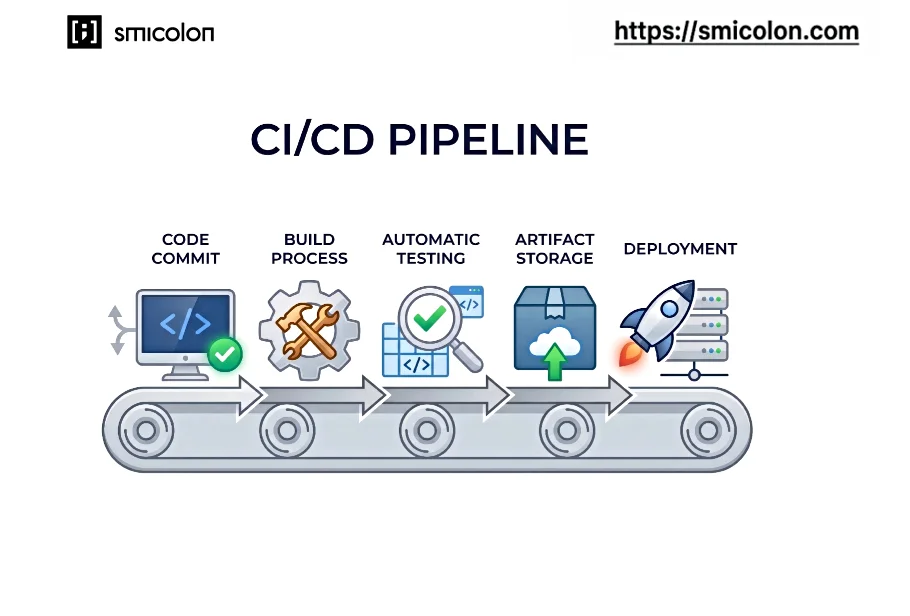

CI/CD Workflow Support

Continuous integration and deployment pipelines already automate building, testing, and deployment. Agentic AI workflows can operate within these pipelines to analyse failures, validate changes, and improve release reliability. AI agents add feedback and validation during execution, helping teams catch issues earlier in the pipeline.

Code Review Automation

Code review is another area where agentic workflow automation supports development teams. AI agents can review pull requests as part of the workflow and flag likely issues before a human reviewer looks at the change, which reduces manual review effort.

Together, these sections show how agentic workflows support key development tasks across the software lifecycle. Now that these systems assist many development tasks, the next question is how companies are using them in real workflows.

How Companies Are Using Agentic Workflows in Real Systems

As organisations experiment with agentic AI workflows, many are beginning to integrate them into their engineering systems. What started as experimental tooling is gradually becoming part of everyday development environments. Instead of acting as standalone assistants, AI agents now operate across development, research, and operational environments, as seen in real-world engineering workflows highlighted by LangChain. This is especially visible in production systems, where teams manage complex codebases, continuous deployments, and large volumes of system data.

Codebase Analysis and Maintenance

Maintaining large codebases becomes harder as projects grow and dependencies change over time. Many companies are now using agentic AI workflows in large, evolving repositories where manual review is no longer scalable. Within these systems, AI agents continuously scan repositories, identify outdated dependencies, and suggest targeted improvements across the codebase.

This analysis can run continuously as code evolves, allowing teams to track changes and identify improvements over time. Tools such as Cursor enable large-scale codebase analysis across complex repositories, allowing teams to identify structural improvements more efficiently.
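One small piece of this kind of analysis, spotting outdated pinned dependencies, can be sketched as follows. The package names, the `LATEST` mapping, and both function names are hypothetical. A real workflow would query a package index instead of a hard-coded dictionary, and real version strings are not always purely numeric.

```python
def parse_pins(requirements_text: str) -> dict[str, tuple[int, ...]]:
    """Parse `name==X.Y.Z` lines; any other line is ignored."""
    pins: dict[str, tuple[int, ...]] = {}
    for raw_line in requirements_text.splitlines():
        line = raw_line.split("#", 1)[0].strip()  # drop comments
        if "==" not in line:
            continue
        name, version = line.split("==", 1)
        # Assumption: versions are purely numeric, for example 2.31.0.
        parts = tuple(int(p) for p in version.strip().split("."))
        pins[name.strip().lower()] = parts
    return pins


def find_outdated(
    pins: dict[str, tuple[int, ...]],
    latest: dict[str, tuple[int, ...]],
) -> list[str]:
    """List pinned packages whose version is older than the latest known one."""
    report = []
    for name, pinned in sorted(pins.items()):
        newest = latest.get(name)
        if newest is not None and pinned < newest:
            old = ".".join(map(str, pinned))
            new = ".".join(map(str, newest))
            report.append(f"{name}: {old} -> {new}")
    return report


# Hypothetical inputs for illustration only.
REQUIREMENTS = """\
# web stack
alpha-lib==1.4.0
beta-lib==2.0.1  # pinned for compatibility
gamma-lib>=3.0
"""
LATEST = {"alpha-lib": (1, 6, 2), "beta-lib": (2, 0, 1), "gamma-lib": (3, 1, 0)}

outdated = find_outdated(parse_pins(REQUIREMENTS), LATEST)
```

An agent would typically take a report like `outdated` as input and decide which upgrades to propose, leaving the final decision to a reviewer.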

Automated Testing and Validation

Testing is another area where companies are applying AI agent workflows. Instead of relying only on predefined pipelines, AI agents in these workflows can run tests, analyse failures, and identify issues introduced by new code changes. This is particularly useful in active development environments where frequent updates introduce new risks.

Tools such as GitHub Copilot enable workflows where AI systems interpret failing tests and suggest possible fixes, allowing engineers to resolve issues faster while maintaining reliable testing practices.
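Identifying issues introduced by a change often starts with comparing test results before and after it. The sketch below assumes test results are available as simple name-to-pass/fail mappings; `compare_runs` and the test names are hypothetical.

```python
def compare_runs(
    before: dict[str, bool],
    after: dict[str, bool],
) -> dict[str, list[str]]:
    """Classify test outcomes after a change relative to the previous run."""
    # Passed before, fails now: most likely introduced by the change.
    regressions = sorted(
        name for name, ok in after.items() if not ok and before.get(name) is True
    )
    # Fails now and did not exist before: a new test that is failing.
    new_failures = sorted(
        name for name, ok in after.items() if not ok and name not in before
    )
    # Failed before, passes now.
    fixed = sorted(
        name for name, ok in after.items() if ok and before.get(name) is False
    )
    return {"regressions": regressions, "new_failures": new_failures, "fixed": fixed}


# Hypothetical results from two test runs.
before_change = {"test_login": True, "test_cart": True, "test_search": False}
after_change = {
    "test_login": True,
    "test_cart": False,
    "test_search": True,
    "test_export": False,
}

summary = compare_runs(before_change, after_change)
```

Separating regressions from new failures gives an agent, or an engineer, a narrower starting point than the raw list of failing tests.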

Documentation and Knowledge Management

Engineering teams often spend significant time searching through documentation, design notes, and internal knowledge bases. In these workflows, AI agents can summarise documentation and extract key insights from internal systems. These workflows are often integrated into daily development work, especially in teams managing large internal documentation systems.

Models such as Claude support organising internal knowledge and generating summaries of technical material, making it easier for developers to locate relevant information during development work.

Infrastructure Monitoring and Operations

Agentic workflow automation is also used in operational systems, particularly in infrastructure monitoring, where AI agents analyse logs, detect unusual patterns in system metrics, and suggest possible causes when issues occur. They are especially valuable in systems that generate continuous streams of logs and performance data.

Agent frameworks such as AutoGPT can be used to build monitoring workflows in which agents analyse operational data and flag anomalies before they escalate into major incidents.
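To make "detecting unusual patterns in system metrics" concrete, here is a deliberately simple stand-in: a rolling z-score check. It is a classical statistical technique rather than an AI agent, and the window size, threshold, and sample data are assumptions chosen for illustration.

```python
from statistics import mean, stdev


def find_anomalies(
    values: list[float],
    window: int = 5,
    threshold: float = 3.0,
) -> list[int]:
    """Return indices whose value deviates strongly from the preceding window."""
    anomalies = []
    for i in range(window, len(values)):
        baseline = values[i - window:i]
        spread = stdev(baseline)
        if spread == 0:
            continue  # flat baseline: the z-score is undefined, so skip
        z_score = abs(values[i] - mean(baseline)) / spread
        if z_score > threshold:
            anomalies.append(i)
    return anomalies


# Hypothetical latency samples in milliseconds, with one obvious spike.
latency_ms = [100, 102, 98, 101, 99, 100, 350, 101]
spikes = find_anomalies(latency_ms)
```

In an agentic workflow, a check like this would more plausibly act as a cheap trigger that hands the flagged window to an agent for deeper analysis of logs and recent changes.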

Incident Analysis and Response

When production alerts occur, engineering teams usually investigate logs, system metrics, and deployment histories to determine what went wrong. Agentic AI workflows can support this process by coordinating how AI agents analyse multiple sources of system data and identify likely causes of incidents.

By integrating this analysis into agentic workflows, companies can speed up incident triage and reduce the time engineers spend diagnosing routine alerts while still keeping humans in control of final decisions. This matters most in live production environments, where response time directly affects system reliability. To see how these workflows operate, it helps to walk through a real scenario step by step.

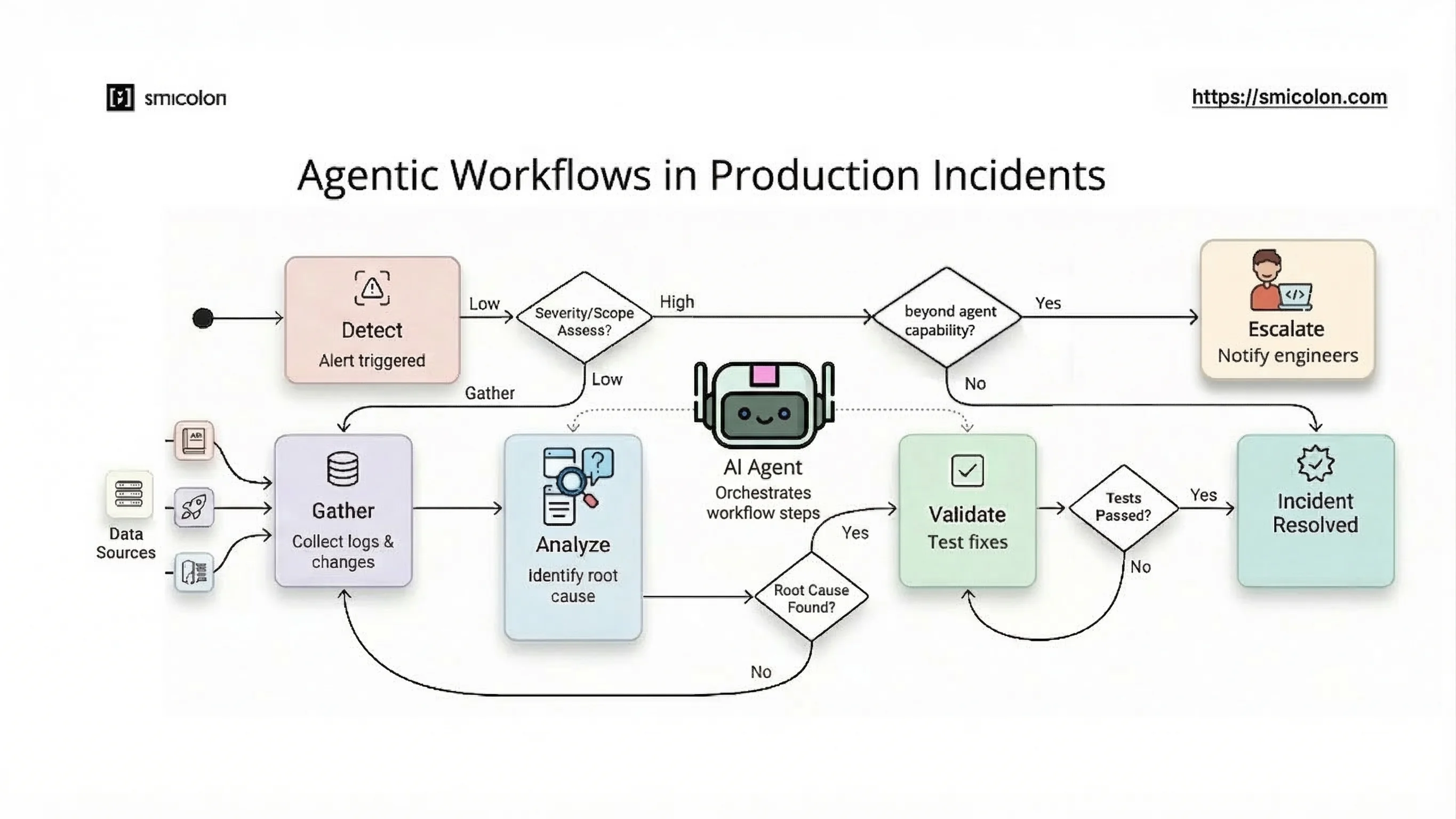

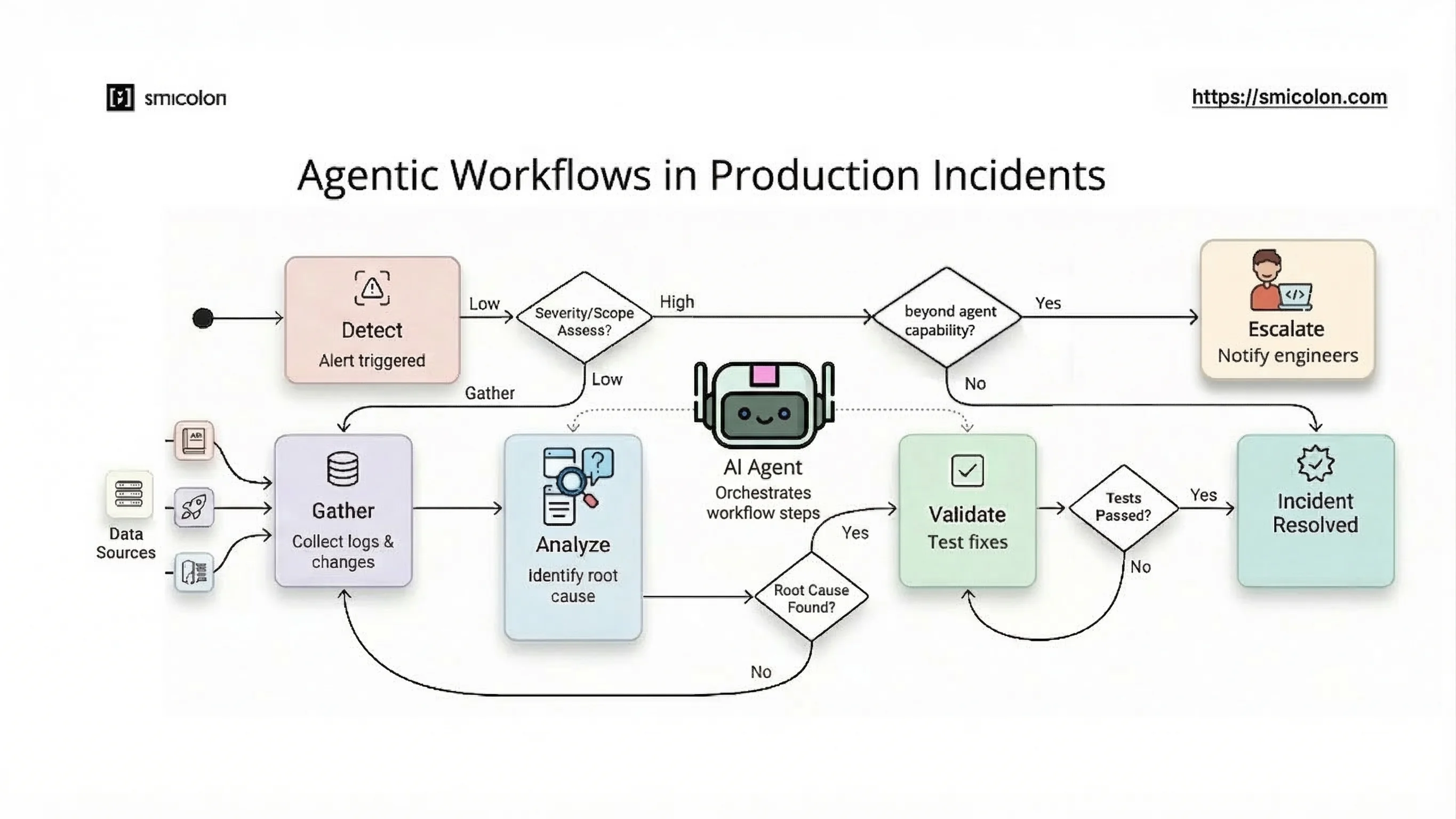

How an Agentic Workflow Operates in a Real Production Scenario

In a real production incident, an agentic workflow coordinates the investigation across systems and data sources, so engineers do not have to switch between tools by hand. When a deployment triggers an alert, the workflow begins by identifying the affected service and gathering relevant system data. It then directs AI agents to investigate the issue, moving across logs, deployments, and system metrics as the analysis progresses.

This process follows a series of steps to investigate and resolve the issue:

Step 1 — Detect the issue

The workflow picks up the alert and identifies the affected service.

Step 2 — Gather context

AI agents collect logs, recent deployment changes, and system metrics related to the issue.

Step 3 — Analyse possible causes

AI agents analyse patterns in logs and correlate them with recent code changes to identify likely root causes.

Step 4 — Suggest or test fixes

AI agents propose fixes or run validation steps, such as re-running tests or checking configurations.

Step 5 — Escalate if needed

If the issue is not resolved, the workflow surfaces findings to engineers with clear context, reducing the time required for investigation.

This is how work moves through an agentic workflow, with AI agents analysing data, testing fixes, and narrowing down the issue before escalation.
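The five steps above can be sketched as a single function. Everything here is an illustrative assumption: the `Alert` and `Report` types, the in-memory log and deployment stores, the naive "blame the latest deployment" heuristic, and the `validate_fix` callback that stands in for re-running tests or checking configuration.

```python
from dataclasses import dataclass, field
from typing import Callable, Optional


@dataclass
class Alert:
    service: str
    message: str


@dataclass
class Report:
    service: str
    likely_cause: Optional[str]
    resolved: bool
    escalate: bool
    evidence: list[str] = field(default_factory=list)


def handle_alert(
    alert: Alert,
    logs: dict[str, list[str]],
    deployments: dict[str, list[str]],
    validate_fix: Callable[[str], bool],
) -> Report:
    # Step 1: detect the issue (the alert names the affected service).
    service = alert.service

    # Step 2: gather context for that service only.
    service_logs = logs.get(service, [])
    recent_deploys = deployments.get(service, [])  # oldest first

    # Step 3: analyse possible causes with a deliberately naive heuristic:
    # error logs plus a recent deployment point at that deployment.
    errors = [line for line in service_logs if line.startswith("ERROR")]
    cause = f"deployment {recent_deploys[-1]}" if errors and recent_deploys else None

    # Step 4: suggest or test a fix (validation is delegated to the caller).
    resolved = validate_fix(cause) if cause is not None else False

    # Step 5: escalate with context if the issue is not resolved.
    return Report(service, cause, resolved, escalate=not resolved, evidence=errors)


# Hypothetical data for illustration.
LOGS = {"checkout": ["INFO started", "ERROR timeout calling payments"]}
DEPLOYS = {"checkout": ["v41", "v42"]}

report = handle_alert(
    Alert("checkout", "5xx rate above threshold"),
    LOGS,
    DEPLOYS,
    validate_fix=lambda cause: False,  # pretend validation did not pass
)
```

Whatever happens in steps 3 and 4, the report carries the evidence forward, so an engineer who receives the escalation does not have to start the investigation from scratch.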

How Agentic Workflows Work (and How They Differ from Traditional Systems)

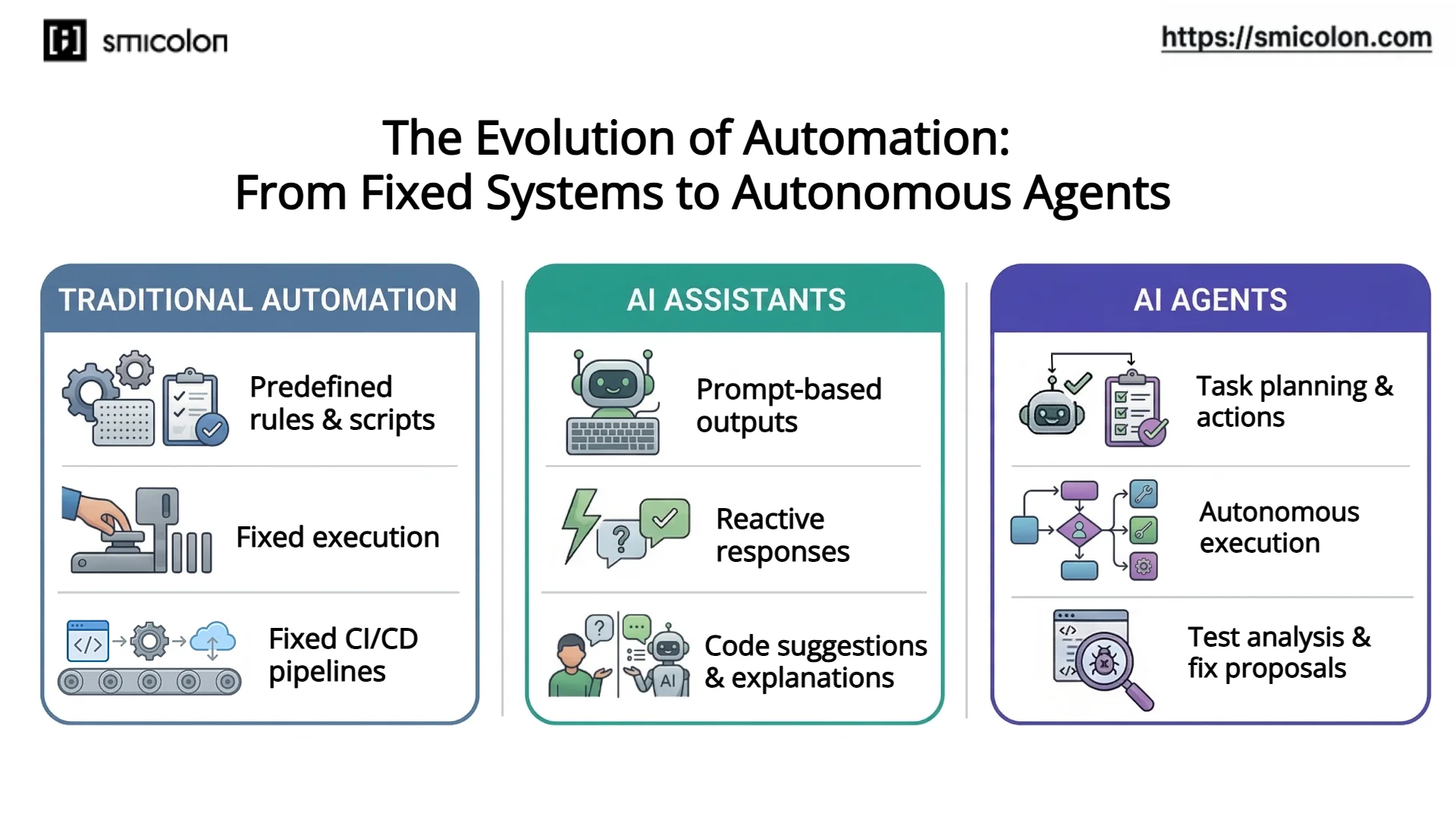

Agentic AI workflows change how software workflows are structured and executed. Instead of following fixed steps, they adapt based on goals, system state, and intermediate results. Anthropic draws a related distinction: workflows orchestrate models and tools through predefined code paths, while agents dynamically direct their own processes and tool usage.

Traditional vs Agentic Workflows

Traditional workflows

- Follow fixed scripts or pipelines

- Steps are predefined and do not change

- Work well for predictable tasks

- Require manual intervention when something breaks

Agentic workflows

- Operate around a goal instead of fixed steps

- Plan actions, use tools, and adjust based on results

- Can handle changing conditions during execution

- Reduce the need for manual coordination

How Agentic Workflows Operate

A typical agentic workflow follows a simple loop:

Goal → Plan → Execute → Check → Repeat

Goal: Define the objective (for example, fix a failing test or update dependencies)

Plan: Decide what steps are needed

Execute: Act through tools that reach the codebase, APIs, or pipelines

Check: Evaluate the result

Repeat: Continue until the goal is achieved
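The loop above maps naturally onto a small generic function. The sketch below is one possible shape, assuming the goal check, planner, and executor are supplied as plain callables. The dependency-update example reuses the goal mentioned above, with hypothetical package names and integer major versions.

```python
from typing import Callable, TypeVar

S = TypeVar("S")  # workflow state
A = TypeVar("A")  # a planned action


def run_loop(
    state: S,
    goal_met: Callable[[S], bool],
    plan: Callable[[S], A],
    execute: Callable[[S, A], S],
    max_iterations: int = 10,
) -> tuple[S, list[A], bool]:
    """Goal -> Plan -> Execute -> Check -> Repeat, with an iteration cap."""
    history: list[A] = []
    while not goal_met(state) and len(history) < max_iterations:  # Check
        action = plan(state)            # Plan: decide the next step
        state = execute(state, action)  # Execute: apply it via a tool
        history.append(action)          # Repeat with the updated state
    return state, history, goal_met(state)


# Goal: bring every package up to its target major version (hypothetical data).
TARGET = {"libbar": 2, "libfoo": 3}


def all_updated(versions: dict[str, int]) -> bool:
    return all(versions[name] >= target for name, target in TARGET.items())


def pick_outdated(versions: dict[str, int]) -> str:
    # Plan one small step: the alphabetically first outdated package.
    return min(name for name, target in TARGET.items() if versions[name] < target)


def bump(versions: dict[str, int], name: str) -> dict[str, int]:
    # Execute: upgrade that package by one major version.
    return {**versions, name: versions[name] + 1}


final, actions, achieved = run_loop(
    {"libbar": 2, "libfoo": 1}, all_updated, pick_outdated, bump
)
```

The iteration cap is an addition in this sketch: without it, a goal that can never be met would keep the loop running.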

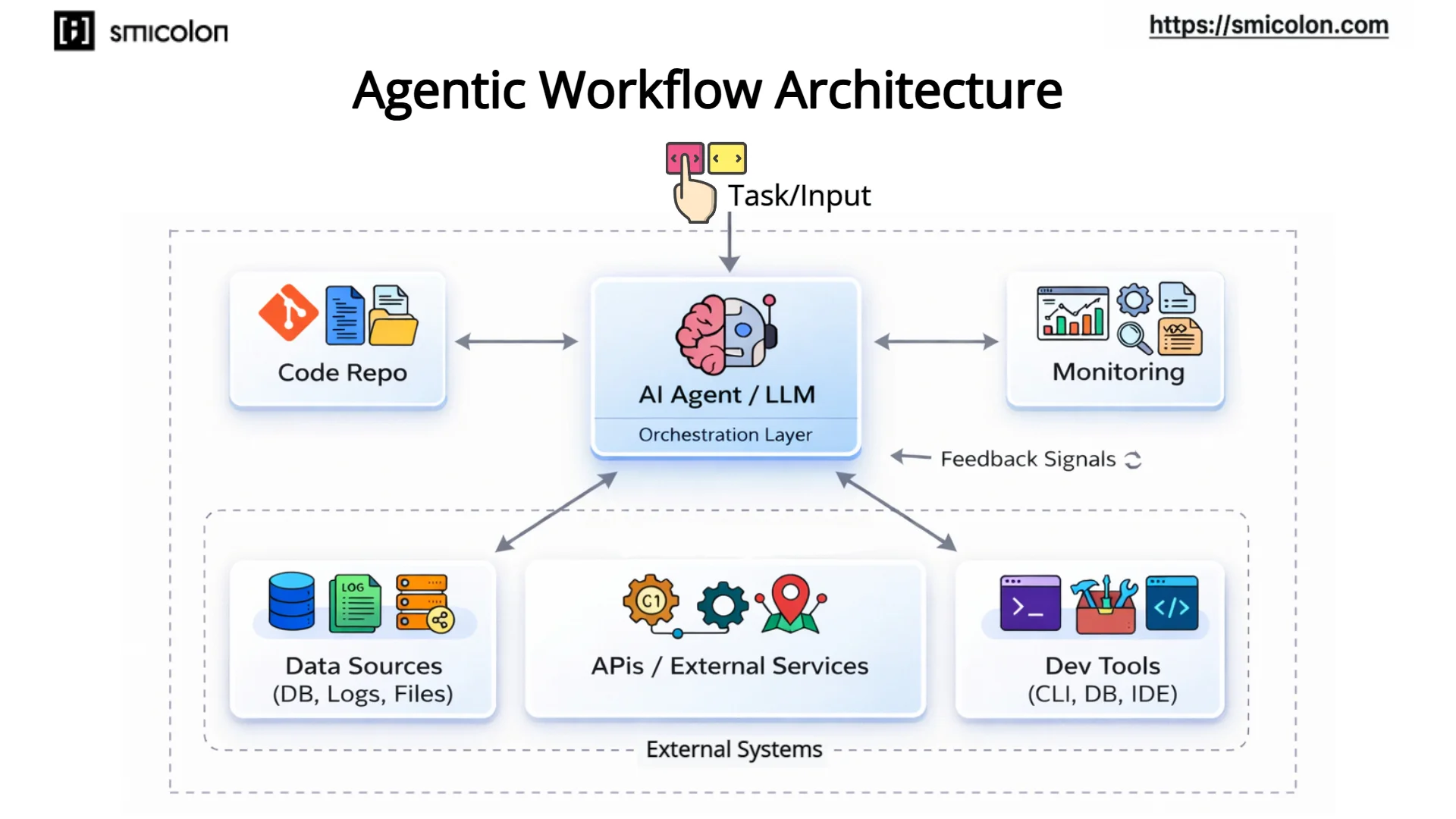

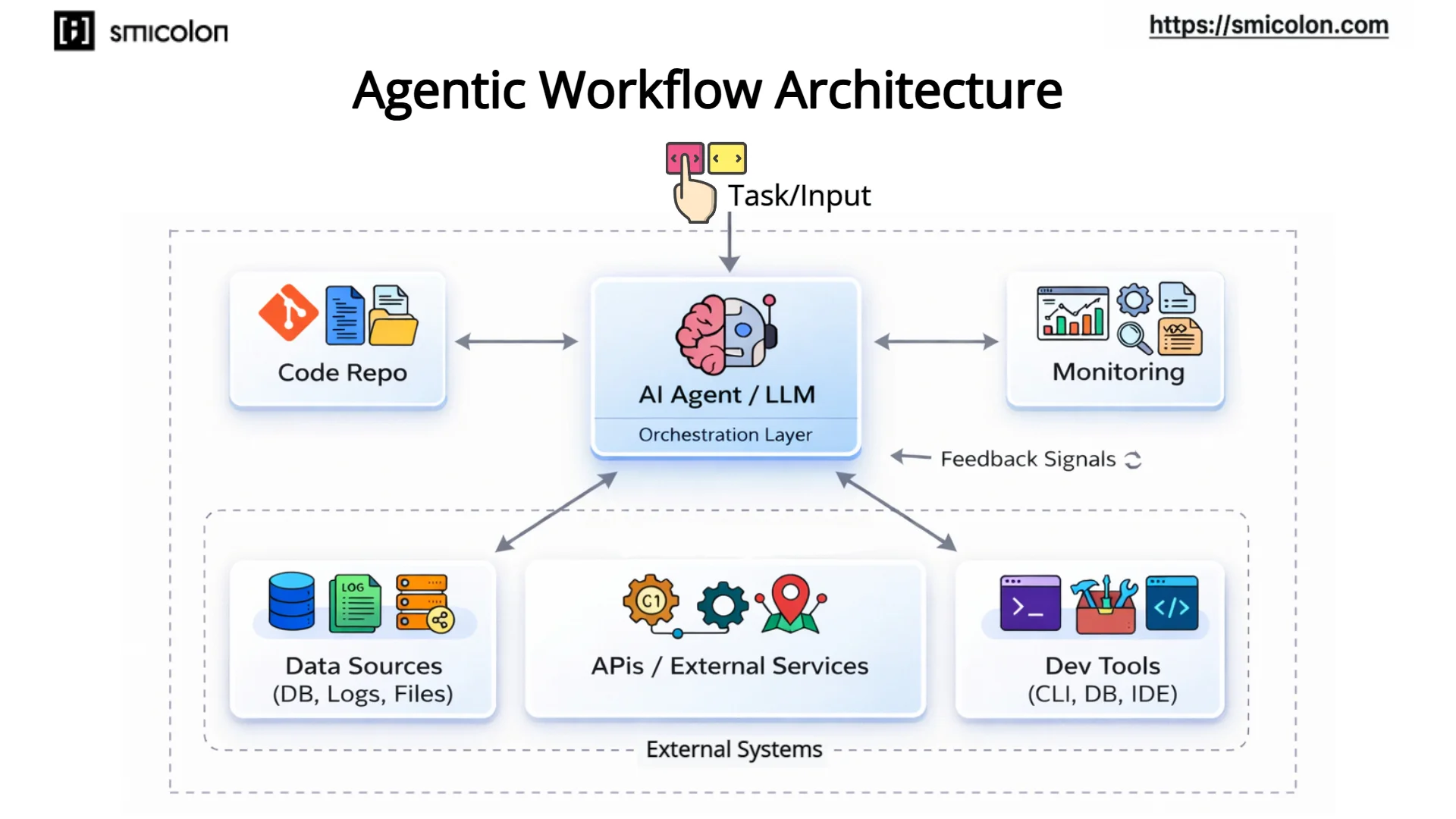

To understand how these workflows are structured in real systems, it helps to look at a typical agentic workflow architecture.

This architecture shows how an orchestration layer coordinates AI agents across tools, data sources, and external systems. Feedback from these systems allows the workflow to adapt and continue until the goal is achieved.

Core Components of Agentic Workflows

These workflows rely on a few core components:

Orchestration layer: Coordinates how tasks are planned and executed

Tool access: Connects the workflow to repositories, APIs, and systems

Feedback loop: Evaluates results and guides the next step

Control layer: Sets limits, permissions, and boundaries
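A compact way to see how these four components fit together is a toy orchestrator. The class names, tool names, and the "stop on failed evaluation" policy are all assumptions made for this sketch. Production orchestration frameworks are considerably richer.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ControlLayer:
    """Limits and permissions: which tools may run, and how many steps."""
    allowed_tools: set[str]
    max_steps: int

    def permits(self, tool_name: str, steps_taken: int) -> bool:
        return tool_name in self.allowed_tools and steps_taken < self.max_steps


class Orchestrator:
    """Coordinates planned steps across tools, using feedback after each one."""

    def __init__(
        self,
        tools: dict[str, Callable[[str], str]],  # tool access
        control: ControlLayer,                   # control layer
        evaluate: Callable[[str], bool],         # feedback loop
    ) -> None:
        self.tools = tools
        self.control = control
        self.evaluate = evaluate

    def run(self, steps: list[tuple[str, str]]) -> list[str]:
        log: list[str] = []
        executed = 0
        for tool_name, argument in steps:
            known = tool_name in self.tools
            if not known or not self.control.permits(tool_name, executed):
                log.append(f"blocked: {tool_name}")
                continue
            result = self.tools[tool_name](argument)
            executed += 1
            if self.evaluate(result):
                log.append(f"ok: {tool_name} -> {result}")
            else:
                log.append(f"stopped: {tool_name} -> {result}")
                break  # feedback says the plan needs rethinking
        return log


# Hypothetical tools; real ones would touch repositories, APIs, or pipelines.
TOOLS = {
    "read_file": lambda path: f"contents of {path}",
    "run_tests": lambda suite: "2 failed",
    "deploy": lambda env: f"deployed to {env}",
}
orchestrator = Orchestrator(
    TOOLS,
    ControlLayer(allowed_tools={"read_file", "run_tests"}, max_steps=5),
    evaluate=lambda result: "failed" not in result,
)
log = orchestrator.run(
    [("read_file", "app.py"), ("deploy", "prod"), ("run_tests", "unit"), ("read_file", "README")]
)
```

Here the `deploy` tool exists but is not on the allow-list, so the control layer blocks it, and the failed test run stops the remaining steps.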

Maintaining consistency across these workflows can be challenging, especially when AI-generated code does not follow team conventions. Open-source tooling such as Smicolon’s AI Kit helps address this by introducing reusable agents, skills, and conventions that guide how AI systems generate and structure code across environments.

The toolkit is open source and ships a set of skills and tools intended to help teams build agentic workflows faster, so that AI-written code follows team conventions, project structure, and coding standards more consistently. It can be adapted to different stacks and workflows, allowing teams to standardise how AI-generated code is created and maintained.

Can AI Agents Work Reliably in Production Systems?

As agentic workflows move into production environments, reliability becomes a key concern. Unlike traditional automation, these systems can make decisions during execution, which introduces new risks if not properly controlled. One of the main challenges is unpredictability, as AI agents may produce outputs that are incorrect, incomplete, or inconsistent with expected system behaviour. In production, even small errors can affect users or downstream services.

To manage this, teams introduce guardrails that limit what AI agents can do, such as restricting tool access, defining allowed actions, and setting boundaries on how changes are applied. At the same time, validation becomes essential. Instead of applying changes directly, agentic AI workflows include validation steps like automated tests, configuration checks, or human approval for critical actions.
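As a rough sketch of how such guardrails and validation steps might be combined, the function below checks an allow-list, runs validators, and holds critical changes for human approval. The action names, validators, and status strings are hypothetical.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass(frozen=True)
class ProposedChange:
    action: str
    target: str
    critical: bool = False


def review_change(
    change: ProposedChange,
    allowed_actions: set[str],
    validators: dict[str, Callable[[ProposedChange], bool]],
    human_approved: bool = False,
) -> str:
    """Decide whether an agent's proposed change may be applied."""
    # Guardrail: only explicitly allowed actions get any further.
    if change.action not in allowed_actions:
        return f"rejected: action '{change.action}' is not allowed"
    # Validation: every check must pass before anything is applied.
    for name, check in validators.items():
        if not check(change):
            return f"rejected: {name} failed"
    # Human approval: critical actions wait for an engineer.
    if change.critical and not human_approved:
        return "pending: human approval required"
    return "applied"


# Hypothetical policy for illustration.
ALLOWED = {"edit_config", "restart_service"}
VALIDATORS = {
    "tests": lambda change: True,  # stand-in for an automated test run
    "config_check": lambda change: not change.target.endswith(".lock"),
}

results = [
    review_change(ProposedChange("drop_table", "users"), ALLOWED, VALIDATORS),
    review_change(ProposedChange("edit_config", "deps.lock"), ALLOWED, VALIDATORS),
    review_change(ProposedChange("restart_service", "api", critical=True), ALLOWED, VALIDATORS),
    review_change(
        ProposedChange("restart_service", "api", critical=True),
        ALLOWED,
        VALIDATORS,
        human_approved=True,
    ),
]
```

The ordering is deliberate in this sketch: the cheapest and strictest check (the allow-list) runs first, and a human is only asked once automated validation has passed.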

Monitoring and observability also play a key role. Since these workflows operate across multiple systems, teams need visibility into actions, decisions, and system responses. Logs, traces, and workflow tracking help engineers diagnose issues when they occur. Even with these controls, production systems still rely on human oversight. AI agents can assist with execution, but engineers remain responsible for reviewing outcomes, especially in high-risk scenarios.
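One lightweight way to get this visibility is to record every agent action as a structured event. The sketch below assumes a simple in-memory trace serialised as JSON lines. The field names and the `WorkflowTrace` class are hypothetical, and a production system would more likely send these events to an existing logging or tracing backend.

```python
import json
import time
from dataclasses import asdict, dataclass, field


@dataclass
class TraceEvent:
    run_id: str
    step: int
    actor: str
    action: str
    outcome: str
    timestamp: float = field(default_factory=time.time)


class WorkflowTrace:
    """Collects the actions and decisions taken during one workflow run."""

    def __init__(self, run_id: str) -> None:
        self.run_id = run_id
        self.events: list[TraceEvent] = []

    def record(self, actor: str, action: str, outcome: str) -> TraceEvent:
        event = TraceEvent(self.run_id, len(self.events) + 1, actor, action, outcome)
        self.events.append(event)
        return event

    def failures(self) -> list[TraceEvent]:
        # Anything that is not "ok" is worth an engineer's attention.
        return [event for event in self.events if event.outcome != "ok"]

    def to_jsonl(self) -> str:
        # One JSON object per line, easy to ship to a log pipeline.
        return "\n".join(json.dumps(asdict(event)) for event in self.events)


trace = WorkflowTrace("incident-001")
trace.record("planner", "choose next step", "ok")
trace.record("executor", "run_tests", "failed: 2 tests")
trace.record("executor", "escalate to engineer", "ok")
```

Numbering the steps within a run makes it possible to reconstruct the order of decisions later, which is the part of debugging that ordinary system logs do not capture.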

In practice, reliable use of AI agents in production depends less on the agents themselves and more on how workflows are designed, controlled, and monitored.

Opportunities and Challenges of Agentic Workflows

Agentic workflows introduce new ways to organise and execute development work, creating opportunities for teams to move faster and handle complexity more effectively. One of the most immediate benefits is faster experimentation, where tasks such as debugging, testing, and iteration can happen continuously within a workflow.

These systems also enable deeper workflow automation, reducing the need for manual coordination across tools and environments. As a result, teams can improve developer productivity by shifting effort away from repetitive tasks and toward higher-level problem solving. They also improve how knowledge is handled by making it easier to generate, access, and reuse technical information across systems.

At the same time, these workflows introduce new challenges that require careful engineering. Reliability remains a concern, as AI-driven decisions can still produce incorrect or inconsistent outcomes. Hallucinations can lead to misleading suggestions, especially when workflows rely on generated outputs without sufficient validation.

Cost can be hard to predict, particularly when workflows depend on repeated model interactions across multiple steps. Security risks increase when agents interact with external systems, APIs, and sensitive data. Debugging becomes more complex as well, because engineers must trace not only system behaviour but also the sequence of decisions made within a workflow. These challenges make it important to design workflows with clear boundaries, validation steps, and visibility from the start.
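One way to put a clear boundary around cost is a shared budget that every step must draw from. The sketch below counts tokens rather than money, to avoid assuming any particular pricing. The class names, the limit, and the per-step numbers are hypothetical.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a step would push the run past its token budget."""


class TokenBudget:
    def __init__(self, limit: int) -> None:
        self.limit = limit
        self.used = 0
        self.by_step: dict[str, int] = {}

    def charge(self, step: str, tokens: int) -> int:
        """Record usage for a step and return the remaining budget."""
        if self.used + tokens > self.limit:
            raise BudgetExceeded(
                f"{step} needs {tokens} tokens, only {self.limit - self.used} left"
            )
        self.used += tokens
        self.by_step[step] = self.by_step.get(step, 0) + tokens
        return self.limit - self.used


budget = TokenBudget(limit=1000)
budget.charge("plan", 300)
budget.charge("execute", 500)
try:
    budget.charge("check", 400)  # would exceed the limit
    stopped = False
except BudgetExceeded:
    stopped = True  # the workflow halts instead of silently overspending
```

Tracking usage per step also shows which part of a workflow drives cost, which helps when deciding where to cache results or reduce retries.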

Conclusion

Agentic workflows are changing how development work is organised across modern software systems. Instead of handling tasks as isolated steps, they connect tools, data, and processes into a continuous flow where execution can adapt based on goals and system state. This shift builds on the growing role of AI agents, moving from simple assistance toward systems that can plan, execute, and iterate across development workflows.

Within these systems, AI agents carry execution through multiple stages without constant manual coordination. They support tasks such as debugging, testing, and code maintenance, and they operate across repositories, pipelines, and monitoring tools, which connects development tasks with real system execution. This makes it easier to manage complex systems where changes, data, and decisions are distributed across tools.

At the same time, these workflows operate differently from traditional automation. Instead of following fixed rules, they rely on goal-driven execution, where actions are planned, evaluated, and adjusted as the workflow progresses. This flexibility introduces new requirements around validation, observability, and control, especially in production environments where reliability becomes critical.

For engineering teams, this creates a more structured way to handle development work while reducing repetitive effort. However, it also requires careful handling of reliability, cost, security, and debugging complexity. The long-term value of agentic AI workflows will depend on how well they are designed, monitored, and integrated into real systems with clear boundaries and strong engineering practices.